
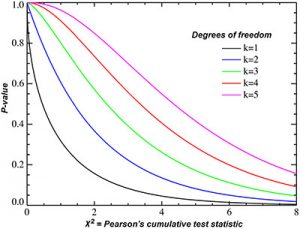

Likelihood that you're going to get something This is our probabilityĭensity function for Q1. This first one over here,įor k of equal to 1, that's the degrees of freedom. Probability density functions for some of theĬhi-square distributions. Kind of the set of chi-squared distributions, Write is a chi-squared, distributed random variable Square that value, sample X2 from the same distribution,Įssentially, square that value, and then add the two. You essentially sample X1 from this distribution, So you could imagineīoth of these guys have distributions like this. Independent, standard, normally distributed Have one independent, standard, normally distributed variable. Random variable, Q2, that is defined as- let's say I

Let's call this Q- let meĭo it in a different color. We have 1 degree ofįreedom right over there. Or normally distributed variable, we say that this Sum of one independent, normally distributed, standard Taking one sum over here- we're only taking the Letter chi, although it looks a lot like a curvy X. Here, this we could write that Q is a chi-squaredĭistributed random variable. To see in this video is that the chi-square, or theĬhi-squared distribution is actually a set Random variable right here is going to be an example

Standard normal distribution and then squaring You're essentially sampling from this the Random variables- and let me define it this way. So a chi-squareĭistribution, if you just take one of these So that could be the standardĭeviation, or the variance, or the standard deviation, Of 1, which would also mean, of course, a standardĭeviation of 1. Normal distribution, a standardized normalĭistribution that looks like this. Of this very variable, we're sampling from a It is that, when we take an instantiation

In that the variance of our random variable The expected value of X, is equal to 0, or Variable with a mean of 0 and a variance of 1.

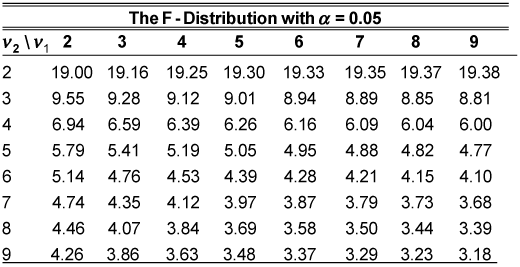

So let's say I haveĪre independent, standard, normal, normallyĭistributed random variables. Really test how well theoretical distributionsĪbout it a little bit. Just talk a little bit about what the chi-squareĭistribution is, sometimes called the chi-squared Some parameters have more than one degree of freedom (an example is the F-stat, which is a fraction and it's numerator and denominator will have separate degrees of freedom) To my understanding, they aren't ever "unknown" in the field and are at most a simple calculation away. This is where the "n - 1" degrees of freedom arises from for sample variance and its corresponding chi-square distribution.įinding the degrees of freedom is simply understanding the math and constraints underlying the parameters you are estimating. In that sense, that last data point isn't "free" to vary because it must be THE value that makes the residuals add to zero. Because all the residuals (distance of each data point from the sample mean) in a sample must add up to 0, you could figure out what the last data point must be if you are all the other ones. Using sample variance to estimate population variance is a typical example used to illustrate the concept (and possibly the most appropriate given that you seem to be studying the chi-square distribution). Degrees of freedom are the number of values that are "free" to vary depending on the parameter you are trying to estimate.
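
The residual constraint and the "n - 1" degrees of freedom described above can be checked numerically. What follows is only a rough sketch, not a definitive implementation: the function names (`residuals`, `last_value_from_mean`, `simulate`), the sample size, the number of trials, and the seed are all assumptions made for illustration. It relies on the standard facts that a sum of k squared independent standard normal values averages about k, and that the sum of squared residuals around the sample mean of n such values averages about n - 1.

```python
import random


def sum_of_squares(values):
    """Return the sum of the squared values."""
    return sum(v * v for v in values)


def residuals(values):
    """Return each value's distance from the sample mean."""
    mean = sum(values) / len(values)
    return [v - mean for v in values]


def last_value_from_mean(mean, others, n):
    """Recover the one remaining data point from the sample mean and the
    other n - 1 points. This is the sense in which it is not 'free'."""
    return n * mean - sum(others)


def simulate(n, trials=20000, seed=0):
    """Draw `trials` samples of n standard normal values (hypothetical
    settings) and return two averages:
      - the mean sum of squared raw values (expected near n)
      - the mean sum of squared residuals  (expected near n - 1)
    """
    rng = random.Random(seed)
    raw_total = 0.0
    resid_total = 0.0
    for _ in range(trials):
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        raw_total += sum_of_squares(sample)
        resid_total += sum_of_squares(residuals(sample))
    return raw_total / trials, resid_total / trials


if __name__ == "__main__":
    raw_mean, resid_mean = simulate(n=5)
    print("mean sum of squares (raw values):", round(raw_mean, 3))
    print("mean sum of squares (residuals): ", round(resid_mean, 3))
```

Using the raw values corresponds to summing n independent squared standard normals, while using residuals imposes the one constraint that they add to zero, which is why the second average drops by roughly one.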
